In the last few months I have worked really hard to put together an introductory data coding course for those who are new to data science. I’ve chosen bash (aka the command line) as the first data language to show you, because I find it easy to understand, even for first-timers. In my articles I start “the story” from the very beginning, so if you have never touched coding/programming before, don’t worry: you will still understand everything. My main focus was to keep everything easy to follow, but also practical and hands-on.

If you go through these 7 articles, you will learn how to do basic data cleaning, data formatting and analytics via the command line. On top of that, you will have your own data server to practice on. We will use it not only here, but in my future SQL and Python tutorials, too.

Note: if you are new to data science, read the data analytics basics first!

Here are the 7 articles!

Need a free Bash Cheat Sheet first?

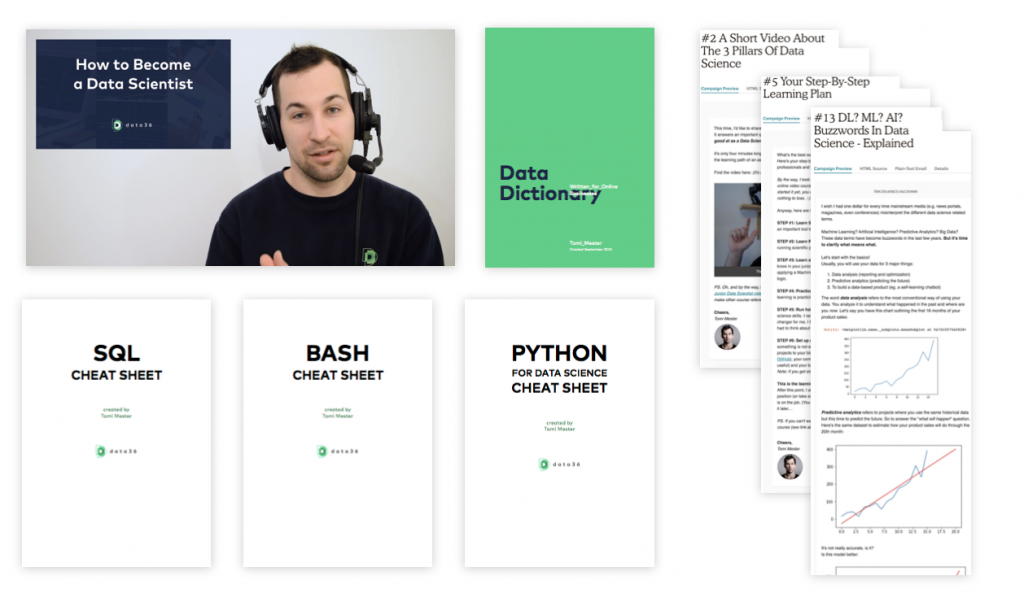

Get my free Bash Cheat Sheet, plus:

- SQL cheat sheet

- Python cheat sheet

- How to Become a Data Scientist (a free 50-minute video course)

- a 6-month email drip (that walks you through everything step by step)

- and more…

You’ll find all of these in my FREE MATERIALS section.

1) Data Coding 101 – How to install Python, SQL, R and Bash (for non-devs)

Step 0 is creating your data environment. In this tutorial I’ll show you how to do that step by step, and as a result you will have your own data infrastructure with Bash, Python, R and SQL. Plus, you will get access to popular tools like IPython, Jupyter, RStudio and pgAdmin 4. All of these are free. READ>>

2) Data Coding 101 – Introduction to Bash – ep1

The first of my bash-specific tutorials covers the basic “orientation” commands (how to create directories, change directories, move files, download files, etc.), some basic data sampling tools (such as head and tail) and the word count tool (wc). READ>>
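To give you a taste of ep1, here is a minimal sketch (not the exact code from the article) of the orientation commands plus head, tail and wc. The directory and file names are hypothetical, and I create the sample file with printf instead of downloading one, so the sketch runs offline.

```bash
#!/usr/bin/env bash
# Minimal sketch of the ep1 "orientation" commands plus head, tail and wc.
# Assumption: data_practice, sample.txt and numbers.txt are made-up names.

mkdir -p data_practice          # create a directory (-p: no error if it already exists)
cd data_practice || exit 1      # change into it (stop if that fails)

# Create a small sample file instead of downloading one.
printf '%s\n' 'line 1' 'line 2' 'line 3' 'line 4' 'line 5' > sample.txt
mv sample.txt numbers.txt       # move (here: rename) the file

head -n 2 numbers.txt           # print the first 2 lines
tail -n 2 numbers.txt           # print the last 2 lines
wc -l < numbers.txt             # count the lines
```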

3) Data Coding 101 – Introduction to Bash – ep2

In the second episode I introduce 3 major concepts of the command line: options, pipes and printing into a file. I’ll also show you the grep command, a widely used filter tool in bash. READ>>
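Here is a rough sketch of how those three concepts fit together with grep. It assumes a tiny made-up file (fruits.txt) rather than the dataset used in the article.

```bash
#!/usr/bin/env bash
# Sketch of ep2's concepts: options, pipes and printing into a file.
# Assumption: fruits.txt is a made-up sample file created right here.
printf '%s\n' apple banana cherry apricot avocado > fruits.txt

# Option: -i makes grep case-insensitive (-c would count matches instead).
grep -i '^a' fruits.txt

# Pipe: send grep's output into wc to count the matching lines.
grep '^a' fruits.txt | wc -l

# Print into a file: > creates or overwrites the file, >> would append to it.
grep '^a' fruits.txt > a_fruits.txt
```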

4) Data Coding 101 – Introduction to Bash – ep3

This chapter gets closer to applied statistics: we calculate our own median, max and min values on a data file with 7M+ rows. The tools we’ll learn for this are the sort and uniq commands! READ>>
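The idea behind these calculations is simple: once a numeric column is sorted, the min is the first line, the max is the last line and the median is the middle line. The sketch below shows that on a 5-line made-up file (values.txt is hypothetical, not the 7M+ row file from the article) and assumes an odd number of values; with an even count you would average the two middle values.

```bash
#!/usr/bin/env bash
# Sketch: min, max and median of one numeric column with sort, head and tail.
# Assumption: values.txt is a tiny made-up file with one number per line.
printf '%s\n' 7 3 9 3 5 > values.txt

sort -n values.txt > sorted.txt      # numeric sort, ascending
min=$(head -n 1 sorted.txt)          # first line = smallest value
max=$(tail -n 1 sorted.txt)          # last line = largest value

# Median: the middle line of the sorted file (odd line count assumed).
count=$(wc -l < sorted.txt)
middle=$(( (count + 1) / 2 ))
median=$(head -n "$middle" sorted.txt | tail -n 1)

echo "min=$min max=$max median=$median"

# uniq only collapses *adjacent* duplicates, so sort first; -c adds counts.
sort -n values.txt | uniq -c
```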

5) Data Coding 101 – Introduction to Bash – ep4 (with video)

Here I’ll show you 9 best practices to speed up your daily work at the command line. And if you don’t like reading, good news: this was my first article that came with a full video tutorial, too (you’ll find it in the article). READ>>

6) Data Coding 101 – Introduction to Bash – ep5

The next step in bash is learning control flow, such as if-then-else statements and while loops. You will put these into scripts, and along the way you will learn how to use bash variables as well. Plus, I’ll show you a little script to prank your friends with fake wifi password hacking… READ>>
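As a quick preview, this minimal sketch combines a variable, a while loop and an if-then-else statement. The threshold and the counting loop are purely illustrative assumptions; the prank script itself is in the article.

```bash
#!/usr/bin/env bash
# Sketch of ep5's building blocks: variables, a while loop and if-then-else.
# Assumption: the threshold value and the 1..5 loop are illustrative only.
threshold=3
big_count=0
i=1

while [ "$i" -le 5 ]; do                 # repeat while i <= 5
  if [ "$i" -gt "$threshold" ]; then     # numeric comparison: i > threshold
    echo "$i is above $threshold"
    big_count=$((big_count + 1))
  else
    echo "$i is not above $threshold"
  fi
  i=$((i + 1))                           # arithmetic expansion to increment
done

echo "values above the threshold: $big_count"
```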

7) Data Coding 101 – Introduction to Bash – ep6 (last episode)

In this closing episode I’ll give you a brief introduction to 4 more command line tools: sed, awk, join and date. All of these will help you format and clean your data, so you can be more flexible in your future data science/analytics projects! READ>>
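To show how these four tools can work together, here is a small sketch on two made-up files (users.txt and scores.txt are hypothetical, as is the report file name). It is one possible combination, not the exact workflow from the article.

```bash
#!/usr/bin/env bash
# Sketch of ep6's tools: sed, join, awk and date on two tiny made-up files.
printf '%s\n' '1 Alice' '2 Bob' '3 Carol' > users.txt
printf '%s\n' '1 42' '2 17' '3 99' > scores.txt

# sed: substitute text (replace the first "Bob" on each line with "Robert").
sed 's/Bob/Robert/' users.txt > users_clean.txt

# join: merge two files on their first field; both inputs must be sorted on it.
join users_clean.txt scores.txt > joined.txt     # one line is "2 Robert 17"

# awk: work with columns; here, sum the 3rd field (the score).
total=$(awk '{ sum += $3 } END { print sum }' joined.txt)
echo "total score: $total"

# date: format today's date, e.g. to put it into an output file name.
today=$(date +%Y-%m-%d)
cp joined.txt "report_$today.txt"
```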


By the end of this series you will be ready to start your own pet project and sharpen your skills through learning by doing!

Check out the Python and SQL tutorials, too!

- If you want to learn more about how to become a data scientist, take my 50-minute video course: How to Become a Data Scientist. (It’s free!)

- Also check out my 6-week online video course: The Junior Data Scientist’s First Month.

Cheers,

Tomi Mester
